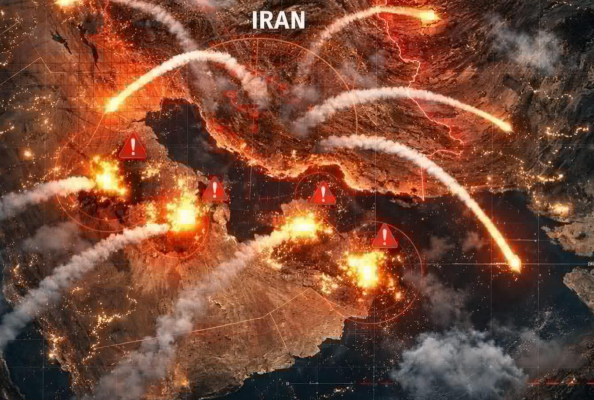

“🔴 BREAKING NEWS… 6 countries join forces to attac… See more.”

It spread fast—faster than most people could even process it. Within minutes, it was trending across social platforms, reposted with urgency, fear, and speculation. The unfinished word—“attac…”—was enough to trigger alarm. People filled in the rest automatically: attack.

Attack where?

Attack whom?

Attack why?

No one seemed to know. And yet, reactions poured in as if the event had already happened.

Some users claimed it was the beginning of a global conflict. Others insisted it was tied to ongoing tensions involving Russia and Ukraine. A few speculated about rising instability in the Middle East, pointing toward Iran or Israel. Names of powerful nations, such as China and the United States, were thrown into the mix without evidence, as if saying them made the story more real.

But beneath all the noise, there was one critical problem:

No credible source confirmed anything.

No official statements.

No verified reports.

No reliable journalism backing the claim.

It was just a headline fragment—engineered to provoke, not inform.

Still, the psychology behind it was powerful.

When people see “BREAKING NEWS” paired with something that looks like a global threat, the brain reacts instantly. It doesn’t wait for details. It doesn’t ask for context. It jumps straight to survival mode—seeking information, sharing alerts, warning others.

That’s exactly what happened.

Group chats lit up. Families messaged each other. Some people even began preparing for worst-case scenarios, despite having no concrete information about what was supposedly happening.

Meanwhile, actual newsrooms remained quiet.

Major outlets didn’t report a six-country military alliance launching an attack. Analysts didn’t issue warnings. Governments didn’t activate emergency communication channels.

Because there was nothing to report.

Eventually, digital investigators and fact-checkers began tracing the origin of the headline. Like many viral posts, it didn’t come from a credible newsroom. It came from a low-quality content site designed to generate clicks through ambiguity and fear.

The phrase “6 countries join forces” wasn’t entirely fabricated—it had been lifted from a completely different context.

In reality, it referred to a joint military exercise.

Training.

Coordination.

Preparation—not aggression.

Military exercises involving multiple countries happen all the time. They’re often planned months in advance and publicly announced. They’re meant to strengthen cooperation, improve readiness, and send strategic signals—but they are not the same as launching an attack.

Somewhere along the way, that context was stripped away.

What remained was a half-finished sentence.

And that was enough.

By the time clarifications started circulating, millions had already seen the original version. And as always, the correction traveled more slowly than the rumor.

That’s one of the defining challenges of modern information:

False or misleading content spreads quickly because it’s designed to trigger emotion.

Accurate information spreads more slowly because it requires explanation.

Later that day, a defense analyst commented on the situation during a live discussion.

“This is a textbook example of how incomplete information can escalate fear,” he said. “You don’t need to lie if you can imply something alarming and let people fill in the gaps.”

That idea stuck.

Because the headline didn’t technically say anything false.

It just stopped at the most provocative moment.

“6 countries join forces to attac…”

The reader does the rest.

They imagine conflict.

They assume urgency.

They react emotionally.

And in doing so, they become part of the spread.

By the evening, more grounded explanations began to take hold. Articles clarified that multinational exercises were underway in various regions, involving cooperation between allied nations. No attacks had been launched. No sudden war had begun.

The world, in other words, was not ending.

But the episode left an impression.

It showed how fragile the line is between information and interpretation. How easily a missing word or incomplete sentence can reshape reality in people’s minds.

It also raised a deeper question:

Why are we so quick to believe the worst?

Part of it is human nature. We’re wired to pay attention to threats. It’s a survival instinct. But in a digital world where anyone can publish anything, that instinct can be exploited.

And it often is.

The next time a headline like that appears—cut off, urgent, demanding attention—it’s worth pausing for a moment.

Ask:

Who is reporting this?

Where is the full context?

Is there confirmation from reliable sources?

Because in many cases, the truth isn’t hidden.

It’s just incomplete.

And sometimes, the difference between panic and understanding comes down to a single missing word.

In this case, that word wasn’t “attack.”